Welcome to the DSI Newsletter

Our newsletter is a compendium of breaking news, the latest research, outreach efforts, and more.

Gradient-Free Deep Learning for Large-Scale Problems

Zeroth-order (ZO) optimization algorithms enable the training of non-differentiable machine learning (ML) pipelines by iteratively estimating the gradient (i.e., the slope of a function) and updating the solution accordingly. This type of optimization has proved useful for relatively small-scale ML problems, but scaling ZO algorithms up to train deep neural networks can come at the expense of solution accuracy.
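To make "estimating the gradient and updating the solution" concrete, here is a minimal sketch of one common ZO approach, a two-point random-direction gradient estimator paired with plain gradient descent. It is an illustration of the general idea only, not the method developed by the LLNL team; the function names `zo_gradient` and `zo_descent` and all parameter values are hypothetical. The key point is that the loss `f` is only ever evaluated, never differentiated.

```python
import random
from typing import Callable, List, Optional

Vector = List[float]


def zo_gradient(f: Callable[[Vector], float], x: Vector, mu: float = 1e-3,
                num_dirs: int = 20, rng: Optional[random.Random] = None) -> Vector:
    """Estimate grad f(x) from function values only (hypothetical helper).

    For each random Gaussian direction u, the finite-difference slope
    (f(x + mu*u) - f(x)) / mu approximates u . grad f(x); multiplying by u
    and averaging gives an estimate of grad f(x), since E[u u^T] = I.
    """
    rng = rng or random.Random()
    d = len(x)
    grad = [0.0] * d
    fx = f(x)  # one baseline evaluation reused for every direction
    for _ in range(num_dirs):
        u = [rng.gauss(0.0, 1.0) for _ in range(d)]
        x_pert = [xi + mu * ui for xi, ui in zip(x, u)]
        slope = (f(x_pert) - fx) / mu  # directional slope along u
        for i in range(d):
            grad[i] += slope * u[i] / num_dirs
    return grad


def zo_descent(f: Callable[[Vector], float], x0: Vector, lr: float = 0.1,
               steps: int = 200, seed: int = 0) -> Vector:
    """Gradient descent driven by the zeroth-order gradient estimate."""
    rng = random.Random(seed)
    x = list(x0)
    for _ in range(steps):
        g = zo_gradient(f, x, rng=rng)
        x = [xi - lr * gi for xi, gi in zip(x, g)]
    return x


if __name__ == "__main__":
    # Toy "black-box" loss with its minimum at (1, 1).
    def loss(v: Vector) -> float:
        return sum((vi - 1.0) ** 2 for vi in v)

    print(zo_descent(loss, [0.0, 0.0]))
```

Each step costs `num_dirs + 1` function evaluations, and the estimate's variance grows with the number of parameters. That growth is one reason scaling ZO methods to deep networks with millions of weights is difficult.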

Developed by LLNL researchers James Diffenderfer, Bhavya Kailkhura, and Konstantinos Parasyris along with colleagues from Michigan State University and the University of...

Register for WiDS Livermore by March 1

You can still sign up for the Lab’s Women in Data Science (WiDS) conference taking place on Wednesday, March 13. This hybrid event is free and open to everyone—inside or outside the Lab, at any career level, and with any level of data science experience. The registration link and other details are posted at data-science.llnl.gov/wids.

- Register by March 1

- Hosted at the University of California Livermore Collaboration Center (UCLCC) and virtually

- Sponsored by LLNL’s Data Science Institute; Computing Principal Directorate; and Office of Inclusion, Diversity, Equity, and Accountability

- This regional...

New Year, New Directions

Happy new year from the DSI! Although a new calendar year has begun, LLNL is already a quarter of the way into Fiscal Year 2024. Each FY brings new goals, challenges, and opportunities to all areas of the Lab, including the DSI. With increased emphasis on, and prioritization of, data science at the national level, particularly artificial intelligence (AI) and machine learning (ML), the U.S. Department of Energy and LLNL recognize the importance of investing in projects and programs that harness these technologies safely and securely.

Our community has grown inside and outside the Lab—this...

White House Executive Order: Making AI Work for the American People

On October 30, the White House announced a new Executive Order (EO) that “establishes new standards for AI [artificial intelligence] safety and security, protects Americans’ privacy, advances equity and civil rights, stands up for consumers and workers, promotes innovation and competition, advances American leadership around the world, and more.”

The Department of Energy (DOE), with input from national labs, is in the process of responding to the EO on multiple fronts. Representatives from the DSI and AI Innovation Incubator (AI3) are working closely with LLNL leadership to provide the DOE...

Highlights from the Leadership Retreat

In September, the DSI leadership team gathered for a two-day retreat to discuss goals, strategies, and activities for the next several years. The team consists of the director, deputy director (see next story), the council, directors of both student programs, data science ambassadors, and administrative and communication leads. As the hub of the Lab’s data science community, the DSI must evolve as LLNL’s mission space and the field evolve.

Some of the DSI’s plans for the new fiscal year, which began on October 1, include refining the Data Science Summer Institute and Data Science Challenge...

Data Science Challenge Tackles ML-Assisted Heart Modeling

For the first time, students from the University of California’s Merced and Riverside campuses joined forces for the two-week Data Science Challenge at LLNL, tackling a real-world problem in machine learning (ML)-assisted heart modeling. Held July 10–21, the event brought together 35 UC students—ranging from undergraduates to graduate students across a variety of majors—to work in groups on four key tasks, using actual electrocardiogram data to predict heart health. According to organizers, the purpose of the challenge was to give students a taste of the broad scope of work...

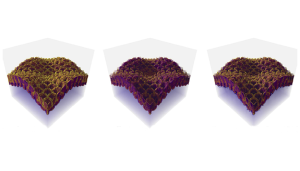

Open Data Initiative Adds CFD Simulation Dataset

The DSI’s Open Data Initiative (ODI) recently added a new project to the catalog: Computational Fluid Dynamics Simulation Data of Spatial Deposition. In fluid mechanics problems, computational fluid dynamics (CFD) uses data structures and numerical analysis to investigate the flow of liquids and gases. For instance, CFD models can simulate atmospheric transport and dispersion, as in this dataset’s simulations of wind-driven pollutant dispersion and deposition. The data is then used to train machine learning models that, in turn, can predict spatial patterns with high accuracy.

This new...

The DSI Turns Five!

Since the DSI’s founding in 2018, the Lab has seen tremendous growth in its data science community and has invested heavily in related research. Five years later, the DSI has found its stride with a multipronged strategy of raising awareness about the field, encouraging partnerships across the community, supporting researchers, and nurturing the next generation of data scientists. A new article chronicles the DSI’s successes and influence both at the Lab and in the external data science community.

Michael Goldman, who directed the DSI until this April, says, “The accomplishments of DSI are...

Brian Giera Named New DSI Director

After five years as the DSI’s director, Michael Goldman is passing the baton to Brian Giera, a materials and manufacturing researcher in LLNL’s Engineering Directorate. “The DSI is a thriving organization, so I am excited for the impact we will have given all the positive momentum,” says Giera. Goldman adds, “Brian will lead the DSI into a promising new phase, given how tremendously the Lab’s workforce and capabilities have grown since we established the DSI in 2018.”

Giera joined LLNL in 2014 as a postdoctoral researcher and currently leads the Analytics for Advanced Manufacturing group in...

Register for Hybrid WiDS Livermore on March 8

The annual Women in Data Science (WiDS) conference returns on Wednesday, March 8. LLNL will again host a regional event in conjunction with the worldwide conference. The all-day WiDS Livermore event is free and will be presented in a hybrid format at the Livermore Valley Open Campus (LVOC) and via WebEx. Everyone is welcome to attend. Register by February 27.

Along with plenty of food and networking opportunities, WiDS Livermore will include a livestream of the Stanford conference where LLNL WiDS Ambassador Marisa Torres has been invited to speak to the global audience. Returning this year is...

LLNL Achieves Fusion Ignition…with Help from Data Science

On December 13, the Department of Energy (DOE) and National Nuclear Security Administration (NNSA) announced the achievement of fusion ignition at LLNL—a major scientific breakthrough decades in the making that will pave the way for advancements in national defense and the future of clean power. In the early hours of December 5, a team at LLNL’s National Ignition Facility (NIF) conducted the first controlled fusion experiment in history to reach this milestone, also known as scientific energy breakeven, meaning it produced more energy from fusion than the laser energy used to drive it. This...

Award-Winning Papers

LLNL’s data science community continues to receive accolades for ground-breaking research and techniques. PDFs or full-text web pages are linked where available.

The 2022 IEEE VIS Test of Time Awards recognize papers that are “still vibrant and useful today and have had a major impact and influence within and beyond the visualization community” (read more at LLNL News). The conference is the premier forum for advances in visualization and visual analytics.

- 25-year award (published in 1997): ROAMing Terrain: Real-Time Optimally Adapting Meshes – Mark Miller and collaborators

- 14-year award...

Leadership Changes with New Fiscal Year

Coinciding with LLNL’s new fiscal year (FY23) beginning on October 1, a few personnel changes took effect for the DSI and Data Science Summer Institute (DSSI). Dan Merl, who leads the Machine Intelligence Group in LLNL’s Center for Applied Scientific Computing, joined the DSI Council to advise on computing and data initiatives. Goran Konjevod, from LLNL’s Computational Engineering Division, moved from his DSSI directorship to the Council to further promote education and workforce initiatives. Statistician Amanda Muyskens joined Nisha Mulakken in co-directing the DSSI. (Read more about Muyskens...

Top AI Award at International Symbolic Regression Competition

An LLNL team claimed a top prize at an inaugural international symbolic regression competition for an artificial intelligence (AI) framework they developed that is capable of explaining and interpreting real-life COVID-19 data. Hosted by the open-source SRBench project at the 2022 Genetic and Evolutionary Computation Conference, the competition invited teams to submit their best symbolic regression algorithms. Organizers trained the models on datasets, assigned “trust ratings,” and evaluated the models for accuracy and simplicity.

The team’s “Unified Deep Symbolic Regression” (uDSR) algorithm beat 12 other...

Open Data Initiative Adds Simulated Cardiac Signals Dataset

Building off LLNL’s Cardioid code, which simulates the electrophysiology of the human heart, a research team has conducted a computational study to generate a dataset of cardiac simulations at high spatiotemporal resolutions. The dataset—which is publicly available for further cardiac machine learning (ML) research via the DSI’s Open Data Initiative—was built using real cardiac bi-ventricular geometries and clinically inspired endocardial activation patterns under different physiological and pathophysiological conditions.

The dataset consists of pairs of computationally simulated intracardiac...

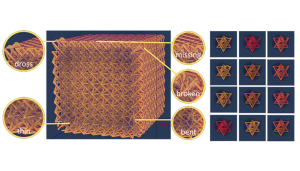

Open Data Initiative Adds X-Ray CT Dataset for Additive Manufacturing

The DSI’s Open Data Initiative (ODI) recently added a new project to its catalog: X-Ray CT Data of Additively Manufactured Octet Lattice Structures. Computed tomography (CT) is a common imaging modality used at LLNL for non-destructive evaluation in a wide range of applications. For example, CT imaging can highlight defects in additively manufactured (AM) structures, which aids in fine-tuning subsequent iterations of development. This new addition to the ODI catalog consists of seven datasets: simulations containing models of x-ray CT simulations showing AM lattice structures with common...

LLNL Wins PacificVis Best Paper Award

Three LLNL computer scientists and University of Utah colleagues have won the 2022 PacificVis Best Paper award. Harsh Bhatia, Peer-Timo Bremer, and Peter Lindstrom co-authored “AMM: Adaptive Multilinear Meshes” (see the PDF and GitHub repository). AMM provides users with a resolution-precision-adaptive representation technique that reduces mesh sizes, thereby reducing the memory and storage footprints of large scientific datasets. The approach combines two solutions into one—reducing data precision and adapting data resolution—to improve the performance and efficiency of data processing and...

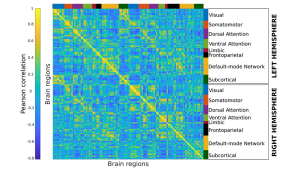

Open Data Initiative Adds Neuroimaging Dataset

The DSI’s Open Data Initiative recently added a new project to its catalog: Derived Products from HCP-YA fMRI. The Human Connectome Project–Young Adult (HCP-YA) dataset includes multiple neuroimaging modalities from 1,200 healthy young adults. These modalities include functional magnetic resonance imaging (fMRI), which measures the blood oxygenation fluctuations that occur with brain activity.

The fMRI data were recorded in multiple sessions per subject: during rest and during a set of tasks designed to evoke specific brain activity. Each fMRI run is a sequence of 3D volumes, and processing these...

Livermore WiDS Provides Forum for Women in Data Science

LLNL celebrated the 2022 global Women in Data Science (WiDS) conference on March 7 with its fifth annual regional event, featuring workshops, mentoring sessions, and a discussion with LLNL Director Kim Budil, the first woman to hold that role. The all-day event attracted women data scientists and students inside and outside the Lab, who gathered to share coding tips and swap stories of their experiences in growing their careers. Attendees tuned in to view presentations by LLNL women data scientists, engage in breakout sessions, and view a livestream of the global WiDS conference hosted by...

Register for Virtual WiDS Livermore on March 7

The annual Women in Data Science (WiDS) conference returns on Monday, March 7, which is International Women’s Day. LLNL will again host a regional event in conjunction with the worldwide conference. The all-day WiDS Livermore event will be entirely virtual and free. Everyone is welcome to attend. Registration is open until February 27.

Sponsored by the DSI and LLNL’s Office of Strategic Diversity and Inclusion Programs, WiDS Livermore will include a livestream of the Stanford conference and networking opportunities. Returning this year is the popular “speed mentoring” session, where mentees...