Projects in the AI3 steer key development efforts for LLNL's programs. AI3 will serve as the foundation for a cohesive view of AI for applied science, built upon LLNL’s cognitive simulation approach that combines state-of-the-art AI technologies with leading-edge supercomputing. The approach has sparked improvements in models for inertial confinement fusion (ICF), predictive biology, advanced manufacturing, and other areas. We also plan to develop and democratize large datasets to share with the broader scientific community, and to create open benchmarks to run on some of the world’s fastest supercomputers, testing the limits and potential of AI at the largest scale of science and technology.

Accelerated Material Discovery

HPC-scale AI for molecule development and synthesis

Generative AI models have made headway in suggesting new candidates with specified molecular properties for small molecules, peptides, and gene sequences, so we see an opportunity to explore that technology for materials science. High-performance technical applications in industry, energy, transportation, and medicine depend on a variety of materials. We are developing large datasets for ML and applying AI on our supercomputers, focusing specifically on materials with complex synthesis and polymeric properties. In addition to creating public datasets that draw from public databases and data found in scientific literature, we are developing new generative modeling approaches to suggest molecular and complex polymer specifications (molecular structure, synthesis, processing) for targeted high-performance properties.

Unsupervised Learning & Design

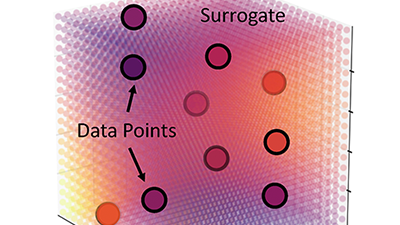

AI theory, software, and hardware for experiment analysis and design

Detailed simulation models rely on approximations that prevent them from perfectly predicting the real world. New AI techniques allow us to combine simulation and experimental data to improve these predictions. In this project, we're developing AI and statistical tools (i.e., theory and software) to improve our prediction models and leveraging computational hardware (e.g., cloud vs. on-premises solutions) that enables these new tools. This effort requires exploring questions about (1) the acceptable level of discrepancy between simulations and experiments while still producing reliable predictions, (2) statistical analysis of likelihoods and other factors, and (3) computational costs and tradeoffs. One of our goals is to produce a roadmap for AI-based model improvement that works across a range of scientific applications including predictive biology, climate science, and ICF experiments and simulations.
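As a rough illustration of combining simulation and experimental data, the sketch below fits a simple linear discrepancy term to the gap between a toy simulation and a handful of synthetic measurements, then adds that term back to the simulation's prediction. Everything here is assumed for illustration: the `simulate` function, the data values, and the choice of a linear correction fitted by ordinary least squares are hypothetical stand-ins, not the project's actual models or methods.

```python
# Minimal sketch (hypothetical): correct an approximate simulation with a
# linear discrepancy term fitted to simulation-vs-experiment residuals.

def simulate(x):
    """Stand-in for an approximate physics simulation (assumed form)."""
    return 2.0 * x

def fit_linear_discrepancy(xs, ys):
    """Least-squares fit of delta(x) = a + b*x to residuals y - simulate(x)."""
    residuals = [y - simulate(x) for x, y in zip(xs, ys)]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_r = sum(residuals) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxr = sum((x - mean_x) * (r - mean_r) for x, r in zip(xs, residuals))
    b = sxr / sxx
    a = mean_r - b * mean_x
    return a, b

def corrected_prediction(x, a, b):
    """Simulation output plus the fitted discrepancy term."""
    return simulate(x) + a + b * x

# Synthetic "experimental" data generated from y = 2.5*x + 1 (made up).
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.5, 6.0, 8.5]

a, b = fit_linear_discrepancy(xs, ys)
prediction = corrected_prediction(4.0, a, b)
print(a, b, prediction)
```

In practice the discrepancy would rarely be linear, and the questions listed above (acceptable discrepancy, statistical likelihoods, computational cost) determine which correction model is appropriate.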

Automated Experiments

“Self-driving” capability to select experimental parameters

Executing experiments requires enormous efforts from scientists to perform routine analysis, compare experimental data with simulations, and develop the conditions for the next experiment. New facilities, like high-repetition-rate high-intensity lasers, produce huge amounts of data on timescales far too fast for humans to select the next experiment. We're exploring AI tools and computational hardware to combine simulation models and real-time experimental data with less human intervention. Such automated methods for experimental execution will allow facilities to “self-drive” (or experiments to “self-steer”) to collect more and higher quality data, deliver improved experimental performance, generate better predictive models, and increase exploration of the experimental regime. Applications in multiple scientific domains are possible. Our testbed will be a high-repetition-rate laser that executes experiments 3 times per second. The goal will be to develop very bright, high-energy proton beams tailored for specific science and medical applications, while improving our models of the underlying phenomena.
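The closed-loop idea can be sketched in a few lines: run a shot, compare the result with the best so far, and pick the next setting automatically. The example below uses a plain hill-climbing rule with a shrinking step size, which is a deliberately simple substitute for the AI-driven selection described above. The `run_shot` function, its single tunable setting, and the yield curve are all hypothetical.

```python
# Minimal sketch (hypothetical): a loop that chooses the next experimental
# setting from previous results without human intervention.

def run_shot(setting):
    """Stand-in for one experiment; returns a measured yield (assumed form)."""
    return -(setting - 0.7) ** 2

def self_steer(initial, step, rounds):
    """Hill-climb on the measured yield, halving the step when stuck."""
    best_setting = initial
    best_yield = run_shot(initial)
    history = [(best_setting, best_yield)]
    for _ in range(rounds):
        improved = False
        # Try one step up, then one step down, from the current best setting.
        for candidate in (best_setting + step, best_setting - step):
            measured = run_shot(candidate)
            history.append((candidate, measured))
            if measured > best_yield:
                best_setting, best_yield = candidate, measured
                improved = True
                break
        if not improved:
            step /= 2.0  # neither neighbor helped, so refine the search
    return best_setting, history

best, history = self_steer(initial=0.0, step=0.4, rounds=20)
print(best, len(history))
```

A real system would replace the hill-climbing rule with a model that weighs simulation predictions against incoming data, but the loop structure (measure, update, propose) stays the same.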

Digital Twinning

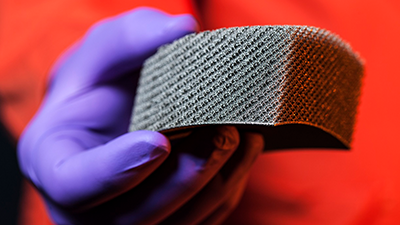

AI methods for improving production parts and processes

Advanced manufacturing production processes, including 3D printing, are complex and produce parts that require careful inspection to ensure they are high quality and defect-free. Working with industry partners, we're using new AI techniques to build detailed computer models of manufacturing processes and parts. These “digital twins” (i.e., virtual representations of real objects) are used in conjunction with other ML techniques to optimize the process, catch existing errors, prevent new ones, and ensure the timely delivery of high-quality parts. This effort has implications for stockpile stewardship and other mission areas where advanced materials are needed.

Hardware Accelerators & Compute Power

Next-generation HPC resources to drive AI capabilities

The repetitive calculations necessary for AI algorithms can potentially be done far faster on cutting-edge computer chips. When paired with traditional supercomputers, AI accelerators can potentially speed up and improve simulations of fluid flow, drug design, nuclear fusion, and more. We're exploring new ways to run scientific simulations using these accelerators with an eye toward defining the next generation of supercomputing platforms. (Cerebras and SambaNova partnerships are recent examples.) Alongside this effort, we're looking ahead to the arrival of El Capitan, the Lab's first exascale HPC system, coming online in 2024. Capable of running more than 1 quintillion (10^18) calculations per second, El Capitan will open up new possibilities for numerical simulation and design. We're developing a novel AI-driven approach to ICF design that takes full advantage of this capability. Our approach uses millions of simulations of fusion implosions to find an optimal digital design, essentially a “deepfake” physics design. Capitalizing on LLNL's recent experimental success generating over a megajoule of fusion energy, we will fire our “deepfake” digital design with the goal of bringing it to life at the Lab's National Ignition Facility.