Machine learning tool fills in the blanks for satellite light curves

Feb. 13, 2024 -

When viewed from Earth, objects in space are seen at a specific brightness, called apparent magnitude. Over time, ground-based telescopes can track a specific object’s change in brightness. This time-dependent magnitude variation is known as the object’s light curve and can allow astronomers to infer the object’s size, shape, material, location, and more. Monitoring the light curve of...

Will it bend? Reinforcement learning optimizes metamaterials

Dec. 13, 2023 -

Lawrence Livermore staff scientist Xiaoxing Xia collaborated with the Technical University of Denmark to integrate machine learning (ML) and 3D printing techniques. The effort naturally follows Xia’s PhD work in materials science at the California Institute of Technology, where he investigated electrochemically reconfigurable structures. In a paper published in the Journal of Materials...

For better CT images, new deep learning tool helps fill in the blanks

Nov. 17, 2023 -

At a hospital, an airport, or even an assembly line, computed tomography (CT) allows us to investigate the otherwise inaccessible interiors of objects without laying a finger on them. To perform CT, x-rays first shine through an object, interacting with the different materials and structures inside. Then, the x-rays emerge on the other side, casting a projection of their interactions onto a...

LLNL, University of California partner for AI-driven additive manufacturing research

Sept. 27, 2023 -

Grace Gu, a faculty member in mechanical engineering at UC Berkeley, has been selected as the inaugural recipient of the LLNL Early Career UC Faculty Initiative. The initiative is a joint endeavor between LLNL’s Strategic Deterrence Principal Directorate and UC national laboratories at the University of California Office of the President, seeking to foster long-term academic partnerships and...

High-performance computing, AI and cognitive simulation helped LLNL conquer fusion ignition

June 21, 2023 -

For hundreds of LLNL scientists on the design, experimental, and modeling and simulation teams behind inertial confinement fusion (ICF) experiments at the National Ignition Facility, the results of the now-famous Dec. 5, 2022, ignition shot didn’t come as a complete surprise. The “crystal ball” that gave them increased pre-shot confidence in a breakthrough involved a combination of detailed...

Visionary report unveils ambitious roadmap to harness the power of AI in scientific discovery

June 12, 2023 -

A new report, the product of a series of workshops held in 2022 under the guidance of the U.S. Department of Energy’s Office of Science and the National Nuclear Security Administration (NNSA), lays out a comprehensive vision for the Office of Science and NNSA to expand their work in the scientific use of AI by building on existing strengths in world-leading high-performance computing systems and data...

Consulting service infuses Lab projects with data science expertise

June 5, 2023 -

A key advantage of LLNL’s culture of multidisciplinary teamwork is that domain scientists don’t need to be experts in everything. Physicists, chemists, biologists, materials engineers, climate scientists, computer scientists, and other researchers regularly work alongside specialists in other fields to tackle challenging problems. The rise of Big Data across the Lab has led to a demand for...

Data science meets fusion (VIDEO)

May 30, 2023 -

LLNL’s historic fusion ignition achievement on December 5, 2022, was the first experiment to ever achieve net energy gain from nuclear fusion. However, the experiment’s result was not actually that surprising. A team leveraging data science techniques developed and used a landmark system for teaching artificial intelligence (AI) to incorporate and better account for different variables and...

LLNL and SambaNova Systems announce additional AI hardware to support Lab’s cognitive simulation efforts

May 23, 2023 -

LLNL and SambaNova Systems have announced the addition of a spatial data flow accelerator into the Livermore Computing Center, part of an effort to upgrade the Lab’s CogSim program. LLNL will integrate the new hardware to further investigate CogSim approaches combining AI with high-performance computing—and how deep neural network hardware architectures can accelerate traditional physics...

Cognitive simulation supercharges scientific research

Jan. 10, 2023 -

Computer modeling has been essential to scientific research for more than half a century—since the advent of computers sufficiently powerful to handle modeling’s computational load. Models simulate natural phenomena to aid scientists in understanding their underlying principles. Yet, while the most complex models running on supercomputers may contain millions of lines of code and generate...

Supercomputing’s critical role in the fusion ignition breakthrough

Dec. 21, 2022 -

On December 5th, the research team at LLNL's National Ignition Facility (NIF) achieved a historic win in energy science: for the first time ever, more energy was produced by an artificial fusion reaction than was consumed—3.15 megajoules produced versus 2.05 megajoules in laser energy to cause the reaction. High-performance computing was key to this breakthrough (called ignition), and HPCwire...

LLNL staff returns to Texas-sized Supercomputing Conference

Nov. 23, 2022 -

The 2022 International Conference for High Performance Computing, Networking, Storage, and Analysis (SC22) returned to Dallas as a large contingent of LLNL staff participated in sessions, panels, paper presentations, and workshops centered around HPC. The world’s largest conference of its kind celebrated its highest in-person attendance since the start of the COVID-19 pandemic, with about 11...

LLNL researchers win HPCwire award for applying cognitive simulation to ICF

Nov. 17, 2022 -

The high-performance computing publication HPCwire announced LLNL as the winner of its Editor’s Choice award for Best Use of HPC in Energy for applying cognitive simulation (CogSim) methods to inertial confinement fusion (ICF) research. The award was presented at the largest supercomputing conference in the world: the 2022 International Conference for High Performance Computing, Networking...

Understanding the universe with applied statistics (VIDEO)

Nov. 17, 2022 -

In a new video posted to the Lab’s YouTube channel, statistician Amanda Muyskens describes MuyGPs, her team’s innovative and computationally efficient Gaussian Process hyperparameter estimation method for large data. The method has been applied to space-based image classification and released for open-source use in the Python package MuyGPyS. MuyGPs will help astronomers and astrophysicists...

Scientific discovery for stockpile stewardship

Sept. 27, 2022 -

Among the significant scientific discoveries that have helped ensure the reliability of the nation’s nuclear stockpile is the advancement of cognitive simulation. In cognitive simulation, researchers are developing AI/ML algorithms and software to retrain part of this model on the experimental data itself. The result is a model that “knows the best of both worlds,” says Brian Spears, a...

LLNL team claims top AI award at international symbolic regression competition

Aug. 16, 2022 -

An LLNL team claimed a top prize at an inaugural international symbolic regression competition for an artificial intelligence (AI) framework they developed capable of explaining and interpreting real-life COVID-19 data. Hosted by the open source SRBench project at the 2022 Genetic and Evolutionary Computation Conference (GECCO), the competition had two tracks—synthetic and real-world—and...

Introduction to deep learning for image classification workshop (VIDEO)

July 6, 2022 -

In addition to its annual conference held every March, the global Women in Data Science (WiDS) organization hosts workshops and other activities year-round to inspire and educate data scientists worldwide, regardless of gender, and to support women in the field. On June 29, LLNL’s Cindy Gonzales led a WiDS Workshop titled “Introduction to Deep Learning for Image Classification.” The abstract...

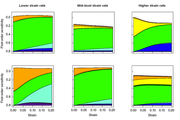

Building confidence in materials modeling using statistics

Oct. 31, 2021 -

LLNL statisticians, computational modelers, and materials scientists have been developing a statistical framework for researchers to better assess the relationship between model uncertainties and experimental data. The Livermore-developed statistical framework is intended to assess sources of uncertainty in strength model input, recommend new experiments to reduce those sources of uncertainty...

Summer scholar develops data-driven approaches to key NIF diagnostics

Oct. 20, 2021 -

Su-Ann Chong's summer project, “A Data-Driven Approach Towards NIF Neutron Time-of-Flight Diagnostics Using Machine Learning and Bayesian Inference,” is aimed at presenting a different take on nToF diagnostics. Neutron time-of-flight diagnostics are an essential tool to diagnose the implosion dynamics of inertial confinement fusion experiments at NIF, the world’s largest and most energetic...

Data Science Challenge welcomes UC Riverside

Oct. 11, 2021 -

Together with LLNL’s Center for Applied Scientific Computing (CASC), the DSI welcomed a new academic partner to the 2021 Data Science Challenge (DSC) internship program: the University of California (UC) Riverside campus. The intensive program has run for three years with UC Merced, and it tasks undergraduate and graduate students with addressing a real-world scientific problem using data...