Jan. 26, 2022

Happy New Year from the DSI Council

The start of a new year is an exciting time because of the opportunity to appraise our data science community’s myriad accomplishments as well as preview upcoming projects and events. Like other areas of LLNL, the DSI has adapted to evolving pandemic restrictions and workplace policies to prioritize safety.

We were pleased to sponsor and contribute to multiple activities in modified or virtual formats: our monthly seminar series, the fourth annual Women in Data Science (WiDS) Livermore event, a new career panel series inspired by WiDS, the Machine Learning for Industry Forum (ML4I), and a three-day workshop focused on artificial intelligence (AI) in healthcare. Many of these events will continue in 2022.

LLNL researchers saw their work recognized at top conferences in machine learning, computer vision, neural networks, AI, and computer science. We added new datasets to the Open Data Initiative and strengthened partnerships with existing collaborators. The new AI Innovation Incubator (AI3) launched, uniting experts from LLNL, industry, and academia to advance AI for large-scale scientific and commercial applications (see next story).

In the coming months, we look forward to welcoming students to Data Science Challenges with UC Merced and UC Riverside, along with the Data Science Summer Institute (DSSI) class of 2022. Our student programs combine two key activities: mentoring the next generation of data scientists and giving them practical experience with complex scientific challenges.

As the DSI leadership team, we’re always interested in supporting new projects that push data science techniques toward ever-more innovative solutions, and we celebrate the deep pool of talent at LLNL that makes our outreach and collaboration efforts possible. If adaptation is an appropriate word to describe the past year, so too is gratitude.

Thank you for subscribing to our newsletter. Feel free to send suggestions for future stories to datascience [at] llnl.gov.

Best wishes for a safe and happy new year,

LLNL Establishes AI Innovation Incubator

LLNL has established the AI Innovation Incubator (AI3), a collaborative hub aimed at uniting experts in artificial intelligence (AI) from LLNL, industry, and academia to advance AI for large-scale scientific and commercial applications. LLNL has entered into new memoranda of understanding with Google, IBM, and NVIDIA, with plans to use the incubator to facilitate discussions and form future collaborations around hardware, software, tools, and utilities to accelerate AI for applied science. In addition, several existing projects will fall under the AI3 umbrella, including continued work with Hewlett Packard Enterprise and Advanced Micro Devices Inc. to demonstrate the power of AI and high-performance computing on the future exascale system El Capitan. This project focuses on innovative, AI-driven cognitive simulation and design optimization methods at unprecedented scales to devise novel approaches to inertial confinement fusion experiments at the National Ignition Facility. Other ongoing projects with AI accelerator/computing companies SambaNova Systems and Cerebras Systems and precision motion company Aerotech, Inc. will be further developed through AI3.

Video: Understanding Materials Behavior with Data Science

Computational chemist Rebecca Lindsey, PhD, explains how machine learning and data science techniques are used to develop diagnostic tools for stockpile stewardship, such as models that predict detonator performance. Lindsey also describes how atomistic simulations improve researchers’ understanding of the microscopic phenomena that govern the chemistry in materials under extreme conditions. For example, machine learning interatomic models have recovered structure, dynamics, and other characteristics with quantum accuracy and computational efficiency, enabling researchers to work in previously inaccessible problem spaces. Watch the video on YouTube (3:35).

Recent Research

A fast and accurate physics-informed neural network reduced order model with shallow masked autoencoder – Youngsoo Choi and David Widemann with colleagues from UC Berkeley

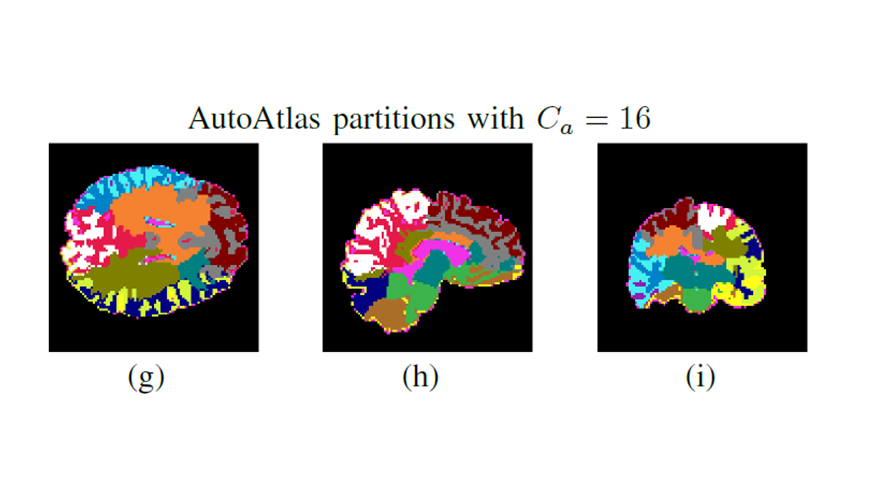

AutoAtlas: neural network for 3D unsupervised partitioning and representation learning – Aditya Mohan and Alan Kaplan (Image above: AutoAtlas performs 3D unsupervised partitioning of an input volume into multiple partitions while producing low-dimensional embedding features for each partition that are useful for metadata prediction.)

Continuous conditional generative adversarial networks for data-driven solutions of poroelasticity with heterogeneous material properties (preprint) – Youngsoo Choi with colleagues from Cornell University and Sandia and Los Alamos national labs

Mixture model framework for traumatic brain injury prognosis using heterogeneous clinical and outcome data (preprint) – Alan Kaplan, Qi Cheng, Aditya Mohan, and Shivshankar Sundaram with colleagues from the Medical College of Wisconsin, the Baylor College of Medicine, the Massachusetts General Hospital and Harvard Medical School, UC San Diego, and UC San Francisco

Reduced order models for Lagrangian hydrodynamics – Dylan Matthew Copeland, Siu Wun Cheung, Kevin Huynh, and Youngsoo Choi

Understanding the limits of unsupervised domain adaptation via data poisoning (preprint) – Bhavya Kailkhura with colleagues from IBM Research and Tulane University

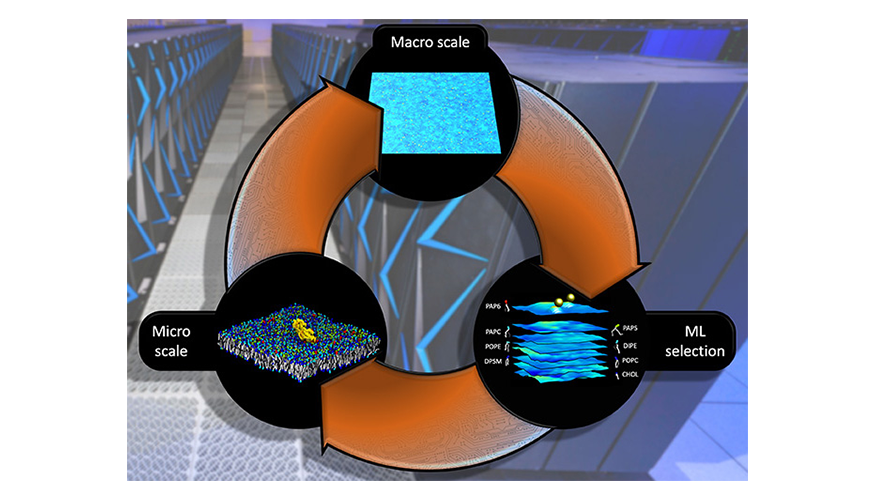

Unprecedented Multiscale Model of Protein Behavior

LLNL researchers and a multi-institutional team of collaborators have developed a highly detailed, machine learning–backed multiscale model revealing the importance of lipids to the signaling dynamics of RAS, a family of proteins whose mutations are linked to numerous cancers. Published in the Proceedings of the National Academy of Sciences, the paper details the methodology behind the Multiscale Machine-Learned Modeling Infrastructure (MuMMI). MuMMI simulates, at both the macro and molecular levels, the behavior of RAS proteins on a realistic cell membrane; their interactions with each other and with lipids (organic compounds that help make up cell membranes); and the activation of signaling through the RAS interaction with RAF proteins. Researchers said the MuMMI framework represents a “fundamentally new technology in computational biology” and could be used to inform new experiments and improve scientists’ basic understanding of RAS protein binding.

The paper is part of an ongoing pilot project of the Joint Design of Advanced Computing Solutions for Cancer collaboration between the Department of Energy, the National Cancer Institute (NCI), and other organizations. It includes co-authors at the NCI’s Frederick National Laboratory for Cancer Research, who are applying some of the insights gained from the model in lab experiments.

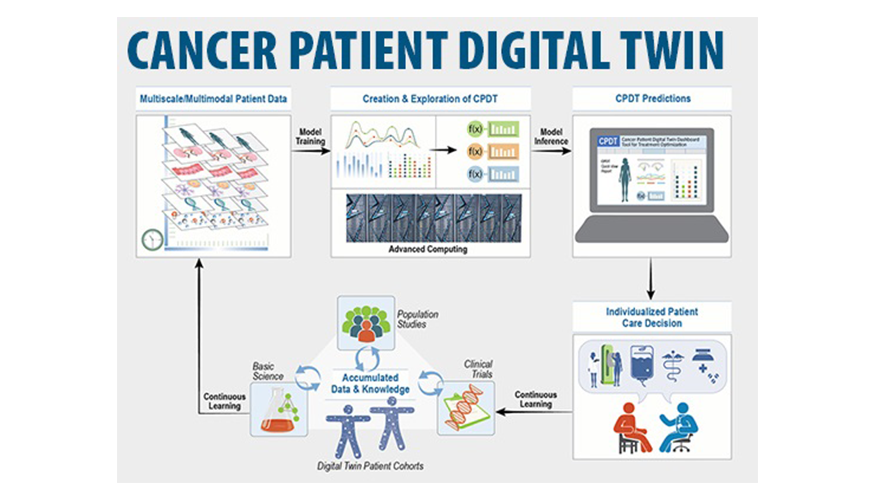

Digital Twin Framework for Predictive Oncology

A multi-institutional team, including an LLNL contributor, has proposed a framework for digital twin models of cancer patients that researchers say would create a “paradigm shift” for predictive oncology. Published in Nature Medicine, the proposed framework for Cancer Patient Digital Twins (CPDTs)—virtual representations of cancer patients using real-time data—combines high-performance computing modeling and simulation, model inference, and clinical data to make treatment predictions and individualized healthcare decisions for cancer patients. Clinicians could use CPDTs to perform virtual experiments, simulating the patient’s disease trajectory under different treatments. At each clinical visit, the predictions would be compared with real-life measurements to assess the digital twin’s performance and update it in a “continuous learning” loop to inform patient decision making.

Seminar Roundup

The DSI’s virtual seminar series included four presentations in the fall months of 2021. These talks were recorded and will be added to the video playlist soon.

Dr. Emilie Purvine of Pacific Northwest National Laboratory presented “Hypergraphs and Topology for Data Science” on October 13. She provided an overview of her team’s work to better represent the inherent complexity that data scientists and applied mathematicians grapple with when analyzing complex systems.

On November 15, Professor Nitin of UC Davis delivered a seminar titled “AI-Enabled Innovations in Validation of Sanitation and Detection of Pathogens.” The presentation focused on advances in verification and validation of sanitation of food contact surfaces and the role of AI methods in this context.

Professor Alan Edelman of the Massachusetts Institute of Technology presented “Julia, the Power of Language” on December 2. He discussed the application of Julia to domains such as climate science, materials design, simulations that require optimization, differential equations, machine learning, and uncertainty quantification.

Dr. Newsha Ajami of Stanford University gave the talk “Harnessing the Digital Revolution to Assessing Water Use Dynamics Under Climatic Stressors and Policy Regimes” on December 13. Using change point detection methodology, she examined various drivers that affect customer-level water demand based on emerging data sources.

Meet a Data Scientist

Computer scientist Michael Ward strives to improve the world in any way he can. “My motivation is often driven by making things better, whether fixing something that’s broken, providing a better experience for a user, or refining something to be more capable or stable,” he explains. Ward works in LLNL’s Global Security Computing Applications Division on data science projects involving geospatial intelligence, object detection, and imagery processing. Before joining the Lab in 2018, Ward built software for sales training, banking, inventory, and telecommunications. He also taught college-level computer science for four years, and says the experience of finding ways to convey complex ideas and technologies to others has come in handy at the Lab. Ward earned bachelor’s and master’s degrees from the University of South Alabama.

Save the Date: WiDS on March 7

For the fifth consecutive year, Women in Data Science (WiDS) Livermore will return (virtually) on Monday, March 7 to coincide with the annual worldwide conference hosted by Stanford University. New this year for Livermore will be workshops and a tie-in with the Computing hackathon. The event will also feature a “fireside chat” conversation between Kim Budil, LLNL’s first woman director, and Jessie Gaylord, leader of LLNL’s Global Security Computing Applications Division.

The next DSI newsletter volume will include the registration link, agenda, and other details about how to participate. Marisa Torres serves as the Lab’s WiDS ambassador and leads the organization team of Holly Auten, Jennifer Bellig, Cindy Gonzales, Anna Poon, Amar Saini, and Mary Silva. WiDS Livermore is free, open to everyone, and co-sponsored by the DSI and the Lab’s Office of Strategic Diversity and Inclusion Programs.

Student Internship Application Deadline

A new year ushers in a new cycle of summer internships. The Data Science Summer Institute (DSSI) application window is open until January 31. This summer’s program will run for 12 weeks and is open to both undergraduate and graduate students. Visit the DSSI website for information about how to apply, including a list of FAQs.