Aug. 25, 2021

New Bayesian ML Code Released

A new Bayesian machine learning (ML) code, MuyGPyS (pronounced my-jee-pies), has been developed as part of a Laboratory Directed Research and Development Strategic Initiative to address needs for native uncertainty quantification in ML predictions, learning with bounded training times, support for combining ML and model-based Bayesian inference frameworks, and extending the data sizes allowable in Gaussian process (GP) models.

The MuyGPyS code and algorithm offer best-in-class performance on community GP regression benchmarks, as well as image classification performance competitive with or exceeding the accuracy of state-of-the-art convolutional neural networks under certain circumstances. MuyGPyS has been applied at LLNL for space domain awareness problems, cosmology projects, simulation surrogate modeling, and Open Data Initiative (ODI) examples (see next story). Application to reinforcement learning is in progress. Additionally, LLNL’s summer Data Science Challenge with two University of California campuses (Merced and Riverside) leveraged this code.
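
For readers new to GP models, the idea of "native uncertainty quantification" can be illustrated with a minimal, generic GP regression sketch. This is not the MuyGPyS API or the MuyGPs algorithm; it is a textbook exact GP written with NumPy, and the function names, kernel choice, length scale, and toy data are all assumptions made for illustration. It shows that a GP returns a predictive variance alongside each prediction, and that the variance grows away from the training data.

```python
import numpy as np


def rbf_kernel(a, b, length_scale=1.0):
    """Squared-exponential kernel between two sets of 1-D inputs."""
    diff = a[:, None] - b[None, :]
    return np.exp(-0.5 * (diff / length_scale) ** 2)


def gp_predict(x_train, y_train, x_test, length_scale=1.0, noise=1e-6):
    """Return the GP posterior mean and variance at x_test (zero prior mean)."""
    k_tt = rbf_kernel(x_train, x_train, length_scale) + noise * np.eye(len(x_train))
    k_st = rbf_kernel(x_test, x_train, length_scale)
    # Cholesky factorization is a standard, stable way to solve with k_tt.
    chol = np.linalg.cholesky(k_tt)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train))
    mean = k_st @ alpha
    v = np.linalg.solve(chol, k_st.T)
    # The RBF prior variance is 1; subtract the part explained by the data.
    var = 1.0 - np.sum(v ** 2, axis=0)
    return mean, np.maximum(var, 0.0)


# Toy data (hypothetical): noiseless samples of sin(x).
x_train = np.array([-2.0, -1.0, 0.0, 1.0, 2.0])
y_train = np.sin(x_train)

# One test point on a training input, one between inputs, one far away.
x_test = np.array([0.0, 0.5, 5.0])
mean, var = gp_predict(x_train, y_train, x_test)

for x, m, s2 in zip(x_test, mean, var):
    print(f"x={x:4.1f}  mean={m:+.3f}  variance={s2:.4f}")
```

Exact GP inference of this kind requires factoring a matrix whose size grows with the number of training points, which is one reason extending the data sizes allowable in GP models is a stated goal of the project.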

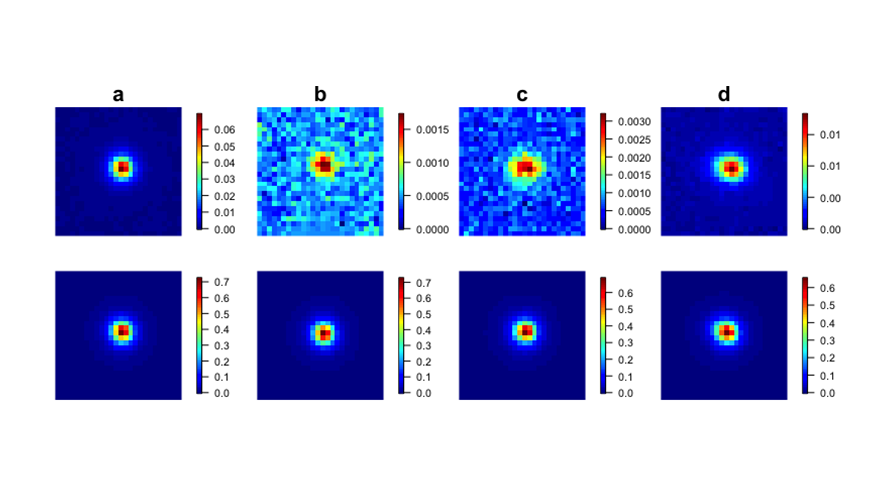

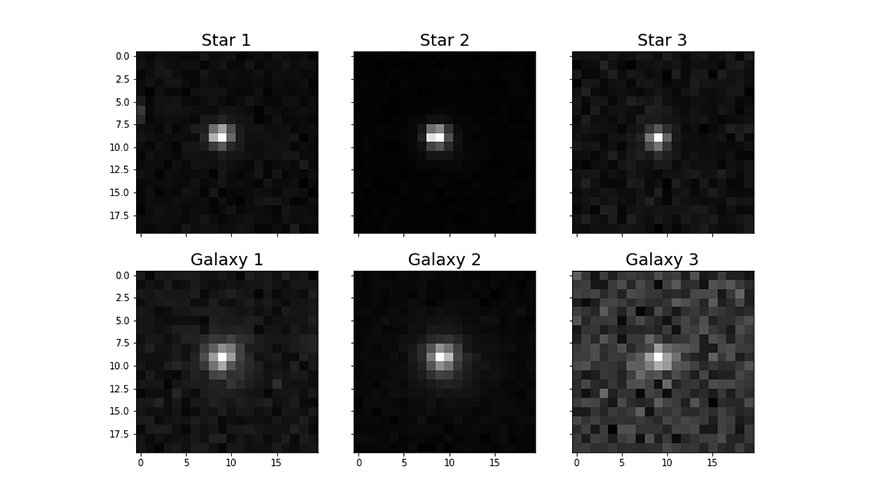

Principal investigator and DSI Council member Michael Schneider stated, “We encourage application of the MuyGPyS code in many circumstances where neural networks might be considered to solve a modeling problem. Planned development of the code will continue to increase the range of data sets and modeling scenarios where MuyGPyS could be expected to give state-of-the-art performance. With the open-source code release on GitHub, we look forward to growing the community of users and contributors.” Joining Schneider on the project are Bob Armstrong, Jason Bernstein, James Buchanan, Ryan Dana, Iméne Goumiri, Amanda Muyskens, Ben Priest, and Kerianne Pruett. (Image: Examples of image data—normalized i band and point spread functions in star and galaxy observations—analyzed with MuyGPyS benchmarks.)

Open Data Initiative Expands with Astronomy Project

The DSI’s Open Data Initiative has added a new project to its catalog: Zwicky Transient Facility (ZTF) Image Classification Using MuyGPyS. The ZTF is a wide-field astronomical imaging survey seeking to answer many of the universe’s mysteries by frequently imaging billions of objects in our night sky. LLNL scientists have developed a computationally efficient GP hyperparameter estimation method, MuyGPs, that can be applied to image classification tasks. This project demonstrates the use of MuyGPyS (see previous story), and the code repository contains descriptive notebooks covering every step, from curating a dataset of real stars and galaxies to obtaining a classification accuracy.

Virtual Seminar: AI and Biomedical Data Privacy

For the DSI’s July 28 virtual seminar, Dr. Bradley Malin presented “Artificial Intelligence in Support of Biomedical Data Privacy.” His talk reviewed several attacks on biomedical data as they have evolved over the past several decades, then described a new approach to assessing data privacy risk that builds on computational economic perspectives of risk assessment and artificial data generation methods. Dr. Malin is the Accenture Professor of Biomedical Informatics, Biostatistics, and Computer Science at Vanderbilt University. His research draws upon methodologies in computer science, biomedical science, and public policy to develop novel computational techniques.

LLNL Hosts Next-Gen AI for Nonproliferation Workshop

NNSA’s Office of Defense Nuclear Nonproliferation Research and Development, known as NA-22, sponsors a “Next-Gen AI” workshop series that focuses on mission-driven requirements, challenges, and solutions. With three virtual events since September 2020, the workshops bring together multidisciplinary experts from the DOE, NNSA, U.S. military agencies and intelligence community, academic institutions, and several national laboratories.

LLNL hosted more than 100 attendees for the third workshop on July 28–29. Eddy Banks, who serves as deputy division leader for LLNL’s Global Security Computing Applications Division, co-organized the event alongside Angela Sheffield, senior program manager for AI and Data Science at NA-22. Titled “AI-Enabled Information Fusion for Discovery and Decision Intelligence,” the workshop’s key message was one of fusion, that is, working on multiple research and development activities collaboratively and in parallel, not in isolation. Presentations and panel discussions focused on three aspects of AI in the Defense Nuclear Nonproliferation (DNN) context: dynamic, distributed computing and data management; AI fusion frameworks and algorithms; and discovery and decision making under uncertainty.

Research Highlights

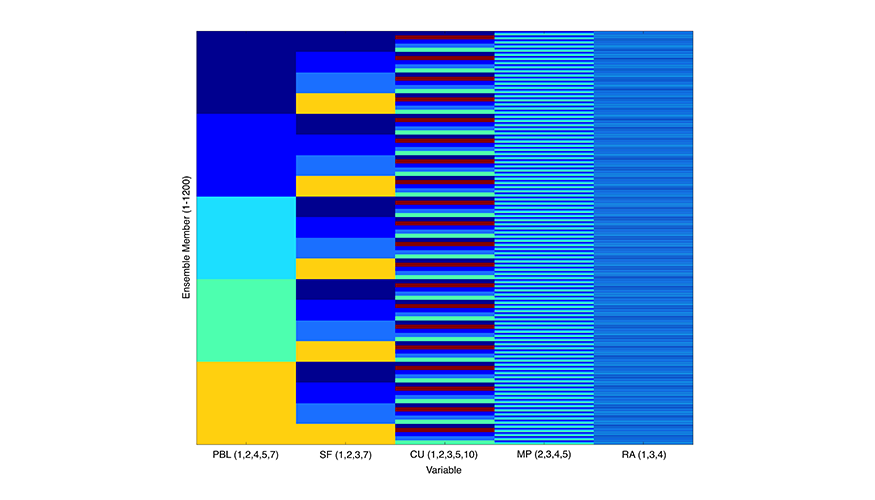

- ML for weather and atmospheric transport models. In the event of an accidental or intentional hazardous material release in the atmosphere, researchers often run physics-based atmospheric transport and dispersion models to predict the extent and variation of the contaminant spread. In a paper recently published in Atmosphere, scientists from LLNL and Scripps Institution of Oceanography present an ML-based method that can be used to quickly emulate spatial deposition patterns from a multi-physics ensemble of dispersion simulations.

- A novel approach to representation learning. To enable powerful, data-efficient representation learning, a research team from LLNL, Carnegie Mellon University, and PathAI proposes a new approach for learning highly expressive GP kernels with small amounts of labeled data by mapping data points to probability distributions in a latent space with a probabilistic neural network. The paper was accepted to the 37th Conference on Uncertainty in Artificial Intelligence (UAI 2021) held in late July.

- A new era in TBI diagnosis and treatment. Traumatic brain injuries (TBIs) affect millions of people each year, whether sustained in car accidents, in sports, or on the battlefield. The Defense and Veterans Brain Injury Center reported nearly 414,000 TBIs among U.S. service members worldwide between 2000 and late 2019, yet treatment and, in many cases, even diagnosis remain elusive. The DSI website recently published details about LLNL’s involvement in the interdisciplinary TRACK-TBI project as well as the MaPPeRTrac brain tractography workflow, an open-source technology to simplify and accelerate neuroimaging research.

New Academic Partnership

Through an engagement with Purdue University’s The Data Mine learning and research community, LLNL and Purdue are partnering to speed up drug design using computational tools under the Accelerating Therapeutic Opportunities in Medicine (ATOM) project. Over two recent semesters, LLNL bioinformatics scientist and ATOM researcher Jonathan Allen mentored 20 undergraduate and graduate students and 2 teaching assistants, introducing them to computationally driven drug discovery and designing predictive models for drug candidates. The students met twice weekly and worked in teams to evaluate virtual compounds for drug-like potential using ATOM-developed open-source software.

Scientific ML for Non-Experts

On July 26, DSI Council member Peer-Timo Bremer presented a virtual talk titled “Scientific Machine Learning for Non-Experts: Intuitions, Opportunities, and Challenges” to an LLNL audience. He discussed some of the intuitions behind ML as applied to scientific problems and why many people are excited by the opportunities it represents. Using several ongoing projects as examples, he also introduced some common concepts in scientific ML and outlined open challenges and common pitfalls. The presentation was part of the Computing Directorate’s “Comp 101” speaker series, and its goal was to help attendees develop an intuition for what is possible and what is too good to be true in using ML to advance LLNL’s missions.

Bremer is a project leader in LLNL’s Center for Applied Scientific Computing and serves as the ML point of contact for the Advanced Scientific Computing Research program. He holds a shared appointment as the Associate Director for Research of the Center for Extreme Data Management, Analysis, and Visualization at the University of Utah.

Inaugural Industry Forum

LLNL’s first-ever Machine Learning for Industry Forum (ML4I) was held on August 10–12. The virtual event was sponsored by the High Performance Computing Innovation Center and the DSI. Participants and attendees came from industry, research institutions, and academia. The schedule featured daily keynote addresses from AI/ML leaders at Ford Motor Company, UC Berkeley’s Robot Learning Lab, and the DOE’s Artificial Intelligence Technology Office. Additional details will be in the next newsletter volume.

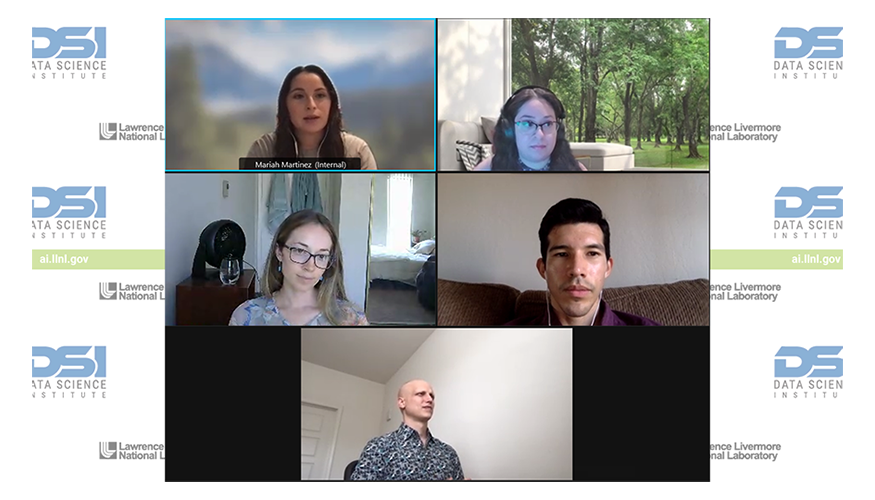

Career Panel Spotlights Former Interns

The DSI’s new career panel series continued on August 10 with its third installment: “Former Interns Tell All!” The panel consisted of former student interns who are now full-time staff in the Computing and Engineering Directorates. Shown here clockwise from top left, Mariah Martinez, Mary Silva (moderator), Jose Cadena, Brian Bartoldson, and Jacquelyn First discussed how their internships helped pave the way for staff positions, offered advice for interviewing and networking remotely, and answered questions from the audience. Martinez, who interned at LLNL for multiple years, stated, “My mentor introduced me to new technologies that I wouldn’t have been exposed to otherwise. I really enjoyed coming to work, learning something new, and getting to know people. It’s never going to be a dull day.”

Students Wrap Up Internships

The Data Science Summer Institute (DSSI) class of 2021 presented their projects virtually during LLNL’s annual Summer SLAM! event on August 5. Mentors and other attendees asked questions about the students’ research and methods on topics such as graph clustering with deep learning, supervised learning in symbolic regression, multilevel graph neural networks, spatiotemporal predictions, natural language processing, and more. Since beginning their internships in late May and early June, the DSSI students have participated in a range of activities including seminars, workshops, mentoring sessions, and virtual tours of LLNL facilities.