Learning about learning: reading group discusses advancements in AI

Teams from around Lawrence Livermore conduct research using artificial intelligence, and the Data Science Institute’s (DSI’s) Machine Learning Reading Group serves as a resource for employees to keep one another apprised of developments in this ever-changing field.

The group meets weekly to share and discuss new literature on machine learning and deep learning, subsets of artificial intelligence that the Lab uses for tasks such as reviewing data from around the world for indicators of nuclear proliferation and developing methods for predicting cancer patient outcomes.

“The fields are evolving very quickly, and one person alone can’t keep up with all the new developments,” according to Barry Chen, a principal research engineer in Engineering, who started the group in 2014.

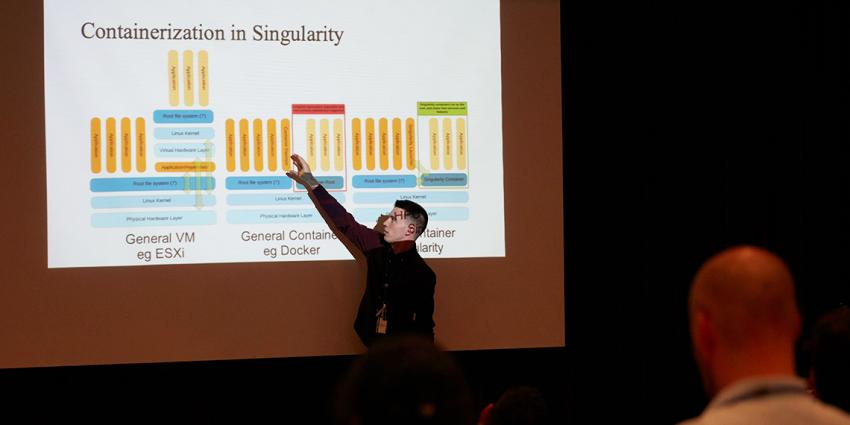

At these free-flowing gatherings, held Tuesday afternoons and open to anyone at the Lab, one volunteer reviews an article, often with a PowerPoint presentation, and others can jump in with questions or comments.

At a recent meeting, T. Nathan Mundhenk, a computational engineer in the Computational Engineering Division, presented an article on adversarial perturbations: small, intentional changes to an input that are designed to mislead image recognition models. The researchers changed just a few pixels in a picture of a rabbit, and the neural network (a mathematical model used in deep learning, inspired by the human brain and consisting of layers of interconnected artificial neurons) classified it as a rhinoceros instead.

“The neural networks are so much more brittle to adversarial attacks than I think some people would have expected a priori,” Mundhenk said. “If we’re going to use these for security purposes, we’d better sort that out.”
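To make the rabbit-to-rhinoceros example concrete, the following is a minimal toy sketch in Python. It is not the method from the article Mundhenk presented. It assumes a hypothetical linear classifier over an eight-pixel "image," with made-up weights, and it greedily nudges only the few pixels that most influence the classifier's score until the predicted label flips. Real attacks target deep neural networks, but the underlying idea is similar: small, targeted input changes can push a model across its decision boundary.

```python
# Toy illustration only: a HYPOTHETICAL linear "image" classifier over a
# flattened 8-pixel image. A positive score means "rabbit"; otherwise
# "rhinoceros". The weights, bias, and pixel values are invented for this demo.

def score(weights, bias, image):
    """Linear decision score: w . x + b."""
    return sum(w * x for w, x in zip(weights, image)) + bias


def label(weights, bias, image):
    """Map the score to a class name."""
    return "rabbit" if score(weights, bias, image) > 0 else "rhinoceros"


def few_pixel_attack(weights, bias, image, max_pixels, step):
    """Greedily change at most max_pixels pixels, each by at most step
    (clipped to [0, 1]), in the direction that lowers the score, stopping
    as soon as the predicted label flips."""
    adv = list(image)
    # Rank pixels by how strongly they influence the score (largest |weight| first).
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]), reverse=True)
    for i in order[:max_pixels]:
        # Moving a pixel against the sign of its weight lowers w . x.
        delta = -step if weights[i] > 0 else step
        adv[i] = min(1.0, max(0.0, adv[i] + delta))
        if score(weights, bias, adv) <= 0:
            break
    return adv


weights = [0.1, -0.2, 0.9, 0.05, -0.8, 0.1, 0.0, 0.2]
bias = -0.1
image = [0.5] * 8

adversarial = few_pixel_attack(weights, bias, image, max_pixels=3, step=0.05)
print(label(weights, bias, image))        # prints "rabbit"
print(label(weights, bias, adversarial))  # prints "rhinoceros"
```

In this toy setup, changing only two of the eight pixels, each by 0.05, is enough to flip the label, which mirrors the brittleness Mundhenk described.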

Machine Learning at the Lab

Machine learning enables a computer to improve its performance on a task by learning from data rather than through explicit human programming. Through deep learning, a subfield of machine learning, researchers have built computer systems that achieve human-level performance on a variety of tasks once thought too difficult for machines to automate, such as object recognition for self-driving cars, automatic speech recognition, and even complex strategy games. (In 2017, a deep learning system beat the world’s top-ranked player of the board game Go.)

The Lab applies machine learning and deep learning in several ways. Mundhenk cited the Global Security directorate’s use of deep learning to assess satellite data, as well as the Weapons and Complex Integration directorate’s applications in analyzing materials manufacturing. Mundhenk also worked on a National Ignition Facility project that used a deep learning recognition program to detect defects on the lenses of the facility’s lasers; this allowed one engineer, rather than several team members, to monitor the lenses, saving effort, time, and money. He noted that because deep learning is computationally expensive, the Lab’s on-site supercomputers position it well for this research.

Brenda Ng, group leader of the Machine Learning Group in the Computational Engineering Division, often presents papers and sometimes moderates sessions when others lead. She works on deep learning projects that examine multimodal data and may reveal a hidden state of the system being observed. Ng cited an example from health care.

“Whenever you go to the doctor, you generate data in the form of doctors’ notes, and that’s in text,” Ng said. “If you’re seriously ill, you might even get something like CTs or MRI scans, and those are in the form of images.” According to Ng, deep learning can combine these different data sources to infer the state of a patient’s health.

Leaders of recent meetings have presented papers on a range of subjects, including language modeling, computer vision, approaches to doctored videos, and face and object processing in the human brain.

“What you’ll find is that the machine learning tools developed for one particular application can be highly relevant for your own application area,” Chen said. “That’s one of the reasons why we have the reading group—to encourage people to pick out new ideas that they might find useful for their own problem.”

The discussions can also give newcomers background on the field. Cindy Gonzales began attending meetings in the fall of 2018, when she was working at the Lab as an administrative assistant and a colleague mentioned the group to her. At first, she found the discussions hard to follow because much of the material was new to her. After joining the Lab’s recently created Data Science Immersion Program, she began attending more regularly, and by a meeting in March 2019 she found she could fully follow the discussion.

“Submerging yourself into the field and listening to the current research is a huge help in understanding machine learning practices and techniques,” said Gonzales, who is now a data scientist in the Global Security Computing Applications Division. She recently led a session that included an overview of the 2019 Conference on Computer Vision and Pattern Recognition in Long Beach, California, and a discussion of an article about loss functions.

Speakers may volunteer, or they may be asked to lead. They discuss topics of their own choosing, and summaries are posted on the group’s internal web page ahead of the session.

Origins of the Group

Chen has been at Lawrence Livermore since 2005. He started the group when the Lab was beginning a new deep learning project and needed to get up to speed on the subject.

“By 2014, deep learning was really on the rise, and so we said we needed to have a forum where not just one person would read all the papers,” Chen said. “We wanted to do this as a team.”

Chen noted that one reason for starting the group was to encourage an open exchange of ideas. “If you have a setting where questions and answers fly back and forth quickly, new questions that you didn’t think about or somebody else hasn’t been thinking about can pop up and be immediately discussed,” he said.

This community began as a kind of study group, according to Ng, who has participated since its launch. It has since reached a much more advanced level, she noted, and discussion leaders now present on state-of-the-art topics.

Mundhenk, whose involvement has grown over the past few months as Chen has become busier with other projects, expects the group to continue shifting away from general machine learning literature and toward deep learning topics.

Reinforcement learning is another, related machine learning field, and in January 2019 research scientist Brenden Peterson launched a separate reading group at the Lab focused on it.

Growing Network

Gonzales lauded the supportive atmosphere and open discussions at the meetings, noting, “I think this is one of the best places to go if you are interested in venturing into the machine learning space.”

Anyone interested can drop in, even without working on machine learning. “Even if you just have a minor interest, just come and feel free to ask questions, because that’s what we’re there for,” Chen said.

Ng acknowledged that employees new to the field might have difficulty following a session’s discussion but suggested that a deep learning course offered by the DSI and the Computing and Engineering Directorates, or the introductory courses the DSI sponsors in the summer, could help. She also noted that the informal structure of the meetings and the diversity of projects people work on provide opportunities for growth.

“Even though the group is first and foremost a learning opportunity,” Ng said, “people should realize it’s a great networking opportunity, and new employees especially should check it out.”

For more information on the Machine Learning Reading Group, contact the DSI at datascience [at] llnl.gov (subject: MLRG).