Machine learning tool fills in the blanks for satellite light curves

Feb. 13, 2024 -

When viewed from Earth, objects in space are seen at a specific brightness, called apparent magnitude. Over time, ground-based telescopes can track a specific object’s change in brightness. This time-dependent magnitude variation is known as an object’s light curve, and can allow astronomers to infer the object’s size, shape, material, location, and more. Monitoring the light curve of...

Will it bend? Reinforcement learning optimizes metamaterials

Dec. 13, 2023 -

Lawrence Livermore staff scientist Xiaoxing Xia collaborated with the Technical University of Denmark to integrate machine learning (ML) and 3D printing techniques. The effort naturally follows Xia’s PhD work in materials science at the California Institute of Technology, where he investigated electrochemically reconfigurable structures. In a paper published in the Journal of Materials...

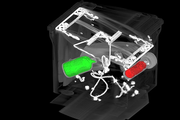

For better CT images, new deep learning tool helps fill in the blanks

Nov. 17, 2023 -

At a hospital, an airport, or even an assembly line, computed tomography (CT) allows us to investigate the otherwise inaccessible interiors of objects without laying a finger on them. To perform CT, x-rays first shine through an object, interacting with the different materials and structures inside. Then, the x-rays emerge on the other side, casting a projection of their interactions onto a...

LLNL, University of California partner for AI-driven additive manufacturing research

Sept. 27, 2023 -

Grace Gu, a faculty member in mechanical engineering at UC Berkeley, has been selected as the inaugural recipient of the LLNL Early Career UC Faculty Initiative. The initiative is a joint endeavor between LLNL’s Strategic Deterrence Principal Directorate and UC national laboratories at the University of California Office of the President, seeking to foster long-term academic partnerships and...

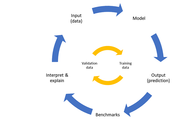

Consulting service infuses Lab projects with data science expertise

June 5, 2023 -

A key advantage of LLNL’s culture of multidisciplinary teamwork is that domain scientists don’t need to be experts in everything. Physicists, chemists, biologists, materials engineers, climate scientists, computer scientists, and other researchers regularly work alongside specialists in other fields to tackle challenging problems. The rise of Big Data across the Lab has led to a demand for...

LLNL researchers win HPCwire award for applying cognitive simulation to ICF

Nov. 17, 2022 -

The high performance computing publication HPCwire announced LLNL as the winner of its Editor’s Choice award for Best Use of HPC in Energy for applying cognitive simulation (CogSim) methods to inertial confinement fusion (ICF) research. The award was presented at the largest supercomputing conference in the world: the 2022 International Conference for High Performance Computing, Networking...

Understanding the universe with applied statistics (VIDEO)

Nov. 17, 2022 -

In a new video posted to the Lab’s YouTube channel, statistician Amanda Muyskens describes MuyGPs, her team’s innovative and computationally efficient Gaussian Process hyperparameter estimation method for large data. The method has been applied to space-based image classification and released for open-source use in the Python package MuyGPyS. MuyGPs will help astronomers and astrophysicists...

LLNL team claims top AI award at international symbolic regression competition

Aug. 16, 2022 -

An LLNL team claimed a top prize at an inaugural international symbolic regression competition for an artificial intelligence (AI) framework they developed capable of explaining and interpreting real-life COVID-19 data. Hosted by the open source SRBench project at the 2022 Genetic and Evolutionary Computation Conference (GECCO), the competition had two tracks—synthetic and real-world—and...

Introduction to deep learning for image classification workshop (VIDEO)

July 6, 2022 -

In addition to its annual conference held every March, the global Women in Data Science (WiDS) organization hosts workshops and other activities year-round to inspire and educate data scientists worldwide, regardless of gender, and to support women in the field. On June 29, LLNL’s Cindy Gonzales led a WiDS Workshop titled “Introduction to Deep Learning for Image Classification.” The abstract...

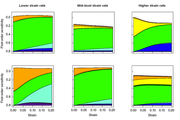

Building confidence in materials modeling using statistics

Oct. 31, 2021 -

LLNL statisticians, computational modelers, and materials scientists have been developing a statistical framework for researchers to better assess the relationship between model uncertainties and experimental data. The Livermore-developed statistical framework is intended to assess sources of uncertainty in strength model input, recommend new experiments to reduce those sources of uncertainty...

Summer scholar develops data-driven approaches to key NIF diagnostics

Oct. 20, 2021 -

Su-Ann Chong's summer project, “A Data-Driven Approach Towards NIF Neutron Time-of-Flight Diagnostics Using Machine Learning and Bayesian Inference,” is aimed at presenting a different take on nToF diagnostics. Neutron time-of-flight diagnostics are an essential tool to diagnose the implosion dynamics of inertial confinement fusion experiments at NIF, the world’s largest and most energetic...

Data Science Challenge welcomes UC Riverside

Oct. 11, 2021 -

Together with LLNL’s Center for Applied Scientific Computing (CASC), the DSI welcomed a new academic partner to the 2021 Data Science Challenge (DSC) internship program: the University of California (UC) Riverside campus. The intensive program has run for three years with UC Merced, and it tasks undergraduate and graduate students with addressing a real-world scientific problem using data...

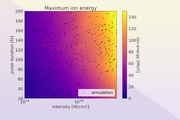

Laser-driven ion acceleration with deep learning

May 25, 2021 -

While advances in machine learning over the past decade have made significant impacts in applications such as image classification, natural language processing, and pattern recognition, scientific endeavors have only just begun to leverage this technology. This is most notable in processing large quantities of data from experiments. Research conducted at LLNL is the first to apply neural...

A winning strategy for deep neural networks

April 29, 2021 -

LLNL continues to make an impact at top machine learning conferences, even as much of the research staff works remotely during the COVID-19 pandemic. Postdoctoral researcher James Diffenderfer and computer scientist Bhavya Kailkhura, both from LLNL’s Center for Applied Scientific Computing, are co-authors on a paper—“Multi-Prize Lottery Ticket Hypothesis: Finding Accurate Binary Neural...

Winter hackathon highlights data science talks and tutorial

March 24, 2021 -

The Data Science Institute (DSI) sponsored LLNL’s 27th hackathon on February 11–12. Held four times a year, these seasonal events bring the computing community together for a 24-hour period where anything goes: Participants can focus on special projects, learn new programming languages, develop skills, dig into challenging tasks, and more. The winter hackathon was the DSI’s second such...

Novel deep learning framework for symbolic regression

March 18, 2021 -

LLNL computer scientists have developed a new framework and an accompanying visualization tool that leverages deep reinforcement learning for symbolic regression problems, outperforming baseline methods on benchmark problems. The paper was recently accepted as an oral presentation at the International Conference on Learning Representations (ICLR 2021), one of the top machine learning...

Ana Kupresanin featured in FOE alumni spotlight

March 10, 2021 -

LLNL's Ana Kupresanin, deputy director of the Center for Applied Scientific Computing and member of the Data Science Institute council, was recently featured in a Frontiers of Engineering (FOE) alumni spotlight. Kupresanin develops statistical and machine learning models that incorporate real-world variability and probabilistic behavior to quantify uncertainties in engineering and physics...

'Self-trained' deep learning to improve disease diagnosis

March 4, 2021 -

New work by computer scientists at LLNL and IBM Research on deep learning models to accurately diagnose diseases from X-ray images with less labeled data won the Best Paper award for Computer-Aided Diagnosis at the SPIE Medical Imaging Conference on February 19. The technique, which includes novel regularization and “self-training” strategies, addresses some well-known challenges in the...

CASC research in machine learning robustness debuts at AAAI conference

Feb. 10, 2021 -

LLNL’s Center for Applied Scientific Computing (CASC) has steadily grown its reputation in the artificial intelligence (AI)/machine learning (ML) community—a trend continued by three papers accepted at the 35th AAAI Conference on Artificial Intelligence, held virtually on February 2–9, 2021. Computer scientists Jayaraman Thiagarajan, Rushil Anirudh, Bhavya Kailkhura, and Peer-Timo Bremer led...

Lab researchers explore ‘learn-by-calibration’ approach to deep learning to accurately emulate scientific process

Feb. 10, 2021 -

An LLNL team has developed a “Learn-by-Calibrating” method for creating powerful scientific emulators that could be used as proxies for far more computationally intensive simulators. Researchers found the approach results in high-quality predictive models that are closer to real-world data and better calibrated than previous state-of-the-art methods. The LbC approach is based on interval...